Tariq Saeedi

As I review the unfolding events of the past two weeks, one dimension of this conflict stands out with quiet but unmistakable clarity: the central role of artificial intelligence in shaping how strikes are selected, prioritized, and executed.

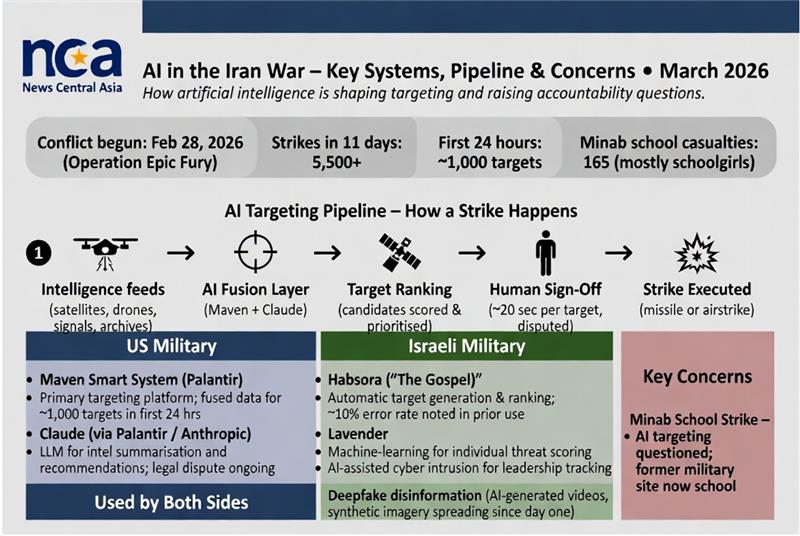

Operation Epic Fury, which began on February 28, 2026, saw more than 5,500 strikes in eleven days — roughly 1,000 of them in the first 24 hours alone.

Public reporting and official statements confirm that AI systems have been instrumental in achieving this pace, processing vast streams of intelligence far faster than traditional methods would allow.

The process follows a structured pipeline. Intelligence feeds from satellites, drones, signals, and archived data flow first into an AI fusion layer. There, systems such as Palantir’s Maven Smart System, augmented by Anthropic’s Claude large language model, synthesize and summarize the information. Targets are then ranked and prioritized before a human operator provides final sign-off — a step that, according to multiple accounts, can take as little as twenty seconds per recommendation. Only then does the strike proceed.

On the American side, the Maven Smart System has been the primary platform. It fuses satellite imagery, drone footage, and signals intelligence to generate and rank potential targets. Claude, embedded within Maven, assists in summarizing complex data streams and producing actionable recommendations.

This combination reportedly enabled commanders to identify and strike roughly 1,000 Iranian targets on the opening day of the campaign.

Supporting infrastructure comes from Project Nimbus — the cloud contract between the Israeli government and AWS/Google Cloud — which provides the underlying computing power and general AI tooling for both Israeli and, in certain cases, American operations.

Israeli systems operate in parallel. Habsora, Hebrew for “The Gospel,” serves as the main targeting engine, automatically generating and ranking large volumes of strike candidates at a speed that earlier conflicts could not match.

Lavender, a machine-learning tool originally developed for individual threat scoring, has also been assessed for use against Iranian targets.

In one notable instance, Israeli intelligence reportedly gained access to Tehran’s traffic camera network, using AI-assisted processing to track movements and contribute to the strike that eliminated Supreme Leader Ayatollah Ali Khamenei.

These capabilities have delivered undeniable operational speed. Yet they have also raised measured questions about reliability and oversight.

The tragic strike on the Shajareh Tayyebeh primary school in Minab, which killed an estimated 165 people — the majority of them schoolgirls aged 7 to 12 — is now under Pentagon review. The site had previously served as a military facility; reports suggest the AI recommendation may not have fully reflected its change in use.

Concerns about “automation bias” — the tendency of operators under time pressure to accept AI suggestions too readily — have surfaced in congressional and media discussions, alongside references to a roughly 10 percent error rate observed in earlier deployments of similar Israeli systems in Gaza.

Both sides have also employed AI-generated deepfakes and synthetic imagery to shape narratives. Fabricated videos and manipulated photographs of strikes, explosions, and civilian scenes have circulated widely on social media since the first day of the conflict, complicating public understanding and adding another layer to the information environment.

Among other major AI developers, OpenAI has entered into a separate agreement with the Department of Defense for classified systems. The company has emphasized additional guardrails in its contract, including prohibitions on certain high-stakes automated decisions.

Unlike Anthropic’s Claude, however, OpenAI’s models have not been publicly identified as part of the core targeting pipeline in this campaign. Their role appears limited to broader infrastructure or support functions, consistent with the firm’s stated policies against direct involvement in lethal autonomous systems.

What emerges from these developments is a conflict in which AI has compressed the “kill chain” dramatically while leaving human sign-off formally intact. The technology has not removed the need for judgment; it has simply changed the tempo at which that judgment must be exercised.

As the campaign continues, the balance between speed, accuracy, and accountability remains one of the defining, and most closely watched, features of this war. /// nCa, 16 March 2026